Cross-Validation

Cross-validation is a resampling technique for estimating model performance. It's essential for model selection, hyperparameter tuning, and getting reliable performance estimates.

Why Cross-Validation?

The Problem with Train/Test Split

Single split has issues:

- High variance: Different splits give different estimates

- Wastes data: Can't use test data for training

- Lucky/unlucky split: May not represent true distribution

The Solution

Use multiple splits and average:

- More reliable estimates

- Use all data for both training and validation

- Detect overfitting

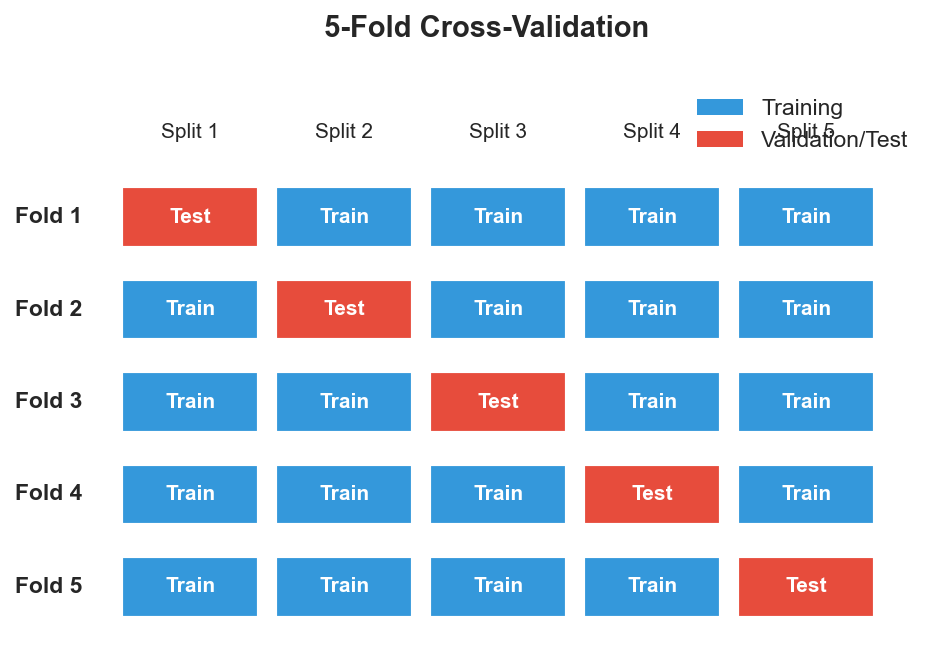

K-Fold Cross-Validation

The most common approach:

Data: [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

Fold 1: Train [3-10], Test [1,2] → Score 0.85

Fold 2: Train [1,2,5-10], Test [3,4] → Score 0.82

Fold 3: Train [1-4,7-10], Test [5,6] → Score 0.87

Fold 4: Train [1-6,9,10], Test [7,8] → Score 0.84

Fold 5: Train [1-8], Test [9,10] → Score 0.86

CV Score: mean = 0.848, std = 0.018

Algorithm

- Shuffle data (usually)

- Split into k equal folds

- For each fold:

- Use 1 fold as validation

- Use k-1 folds as training

- Train model, compute score

- Average scores across folds

Choosing k

| k | Bias | Variance | Computation |

|---|---|---|---|

| Small (3-5) | Higher | Lower | Faster |

| Medium (5-10) | Balanced | Balanced | Moderate |

| Large (>10) | Lower | Higher | Slower |

Standard choice: k=5 or k=10

Leave-One-Out (LOO)

Extreme case: k = n (sample size)

Fold 1: Train on n-1 samples, test on sample 1

Fold 2: Train on n-1 samples, test on sample 2

...

Fold n: Train on n-1 samples, test on sample n

Pros:

- Maximum training data per fold

- Deterministic (no shuffling)

Cons:

- Computationally expensive (n models)

- High variance estimate

- Rarely better than k-fold

Stratified K-Fold

Preserve class distribution in each fold:

Data: [P, P, P, P, N, N, N, N, N, N] (40% P, 60% N)

Stratified fold 1: [P, N, N] (still ~40% P, ~60% N)

Stratified fold 2: [P, N, N] ...

Essential for:

- Imbalanced classification

- Small datasets

- Multi-class problems

Group K-Fold

Ensure groups don't span train and test:

Patient data: Multiple samples per patient

❌ Wrong: Patient 1 in train AND test → data leakage

✓ Right: Each patient entirely in train OR test

Use when:

- Multiple samples from same source

- Time series from same entity

- Medical data (multiple visits)

Time Series Split

Respect temporal order:

Fold 1: Train [1,2,3], Test [4]

Fold 2: Train [1,2,3,4], Test [5]

Fold 3: Train [1,2,3,4,5], Test [6]

Never train on future, test on past!

Nested Cross-Validation

For hyperparameter tuning without bias:

Outer CV (k=5): Estimate generalization

└─ Inner CV (k=5): Tune hyperparameters

For each outer fold:

1. Use inner CV to find best hyperparameters

2. Train with best params on full outer training set

3. Evaluate on outer test set

Report outer CV scores (unbiased!)

Why nested?

- Single CV for both tuning and evaluation → optimistic bias

- Nested separates model selection from evaluation

Repeated K-Fold

Run k-fold multiple times with different shuffles:

Repeat 1: 5-fold CV → [0.85, 0.82, 0.87, 0.84, 0.86]

Repeat 2: 5-fold CV → [0.84, 0.86, 0.83, 0.85, 0.87]

Repeat 3: 5-fold CV → [0.86, 0.84, 0.85, 0.83, 0.86]

Final: mean ± std of all 15 scores

Reduces variance of estimate.

Cross-Validation Pitfalls

1. Data Leakage

Wrong:

# Scale before split → leakage!

X_scaled = scaler.fit_transform(X)

cv_scores = cross_val_score(model, X_scaled, y)

Right:

# Use pipeline → scaling inside CV

pipeline = Pipeline([

('scaler', StandardScaler()),

('model', LogisticRegression())

])

cv_scores = cross_val_score(pipeline, X, y)

2. Feature Selection Leakage

Wrong:

# Select features on all data → leakage!

X_selected = SelectKBest().fit_transform(X, y)

cv_scores = cross_val_score(model, X_selected, y)

Right:

# Include selection in pipeline

pipeline = Pipeline([

('select', SelectKBest(k=10)),

('model', LogisticRegression())

])

3. Ignoring Groups

If samples are correlated (same patient, same session), use GroupKFold.

4. Wrong Metric

Use the same metric you care about:

cross_val_score(model, X, y, scoring='f1')

cross_val_score(model, X, y, scoring='roc_auc')

Practical Usage

from sklearn.model_selection import (

cross_val_score,

StratifiedKFold,

GroupKFold,

TimeSeriesSplit

)

# Basic k-fold

scores = cross_val_score(model, X, y, cv=5)

# Stratified (default for classifiers)

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=42)

scores = cross_val_score(model, X, y, cv=cv)

# With groups

cv = GroupKFold(n_splits=5)

scores = cross_val_score(model, X, y, cv=cv, groups=groups)

# Time series

cv = TimeSeriesSplit(n_splits=5)

scores = cross_val_score(model, X, y, cv=cv)

Key Takeaways

- Cross-validation gives reliable performance estimates

- K-fold (k=5 or 10) is the standard approach

- Use stratified k-fold for classification

- Use group k-fold when samples are correlated

- Use time series split for temporal data

- Put all preprocessing inside the CV loop (use pipelines!)

- Nested CV for hyperparameter tuning + evaluation